AI safety monitoring is becoming a priority for modern engineering teams. A minimum viable product (MVP) for AI safety monitoring and operational intelligence helps startups track model performance and mitigate risks before they reach users. This guide covers the essential steps for product managers to gain visibility into their AI systems.

Setting the Foundation for Model Safety

Startups often rush to market with new AI features without a clear plan for long-term stability, which leads to unexpected behavior and lost customer trust. Building an MVP for AI safety monitoring is one of the most effective ways to prevent these issues. Do not wait until you have a massive user base to think about safety. Teams that integrate monitoring from day one avoid spending months repairing reputation damage that could have been prevented.

A good monitoring tool tracks model drift and identifies when outputs start to diverge from your expectations. It provides the data you need to adjust prompts or training sets quickly, and it alerts you to hallucinations before your customers report them. The goal is not a perfect solution on day one. It is a visibility layer that grows with your application.

Focus on the most common edge cases for your specific niche. If you are building for the legal industry, focus on factual accuracy. If you are in creative writing, focus on tone consistency. This tailored approach ensures that your initial version provides immediate value and keeps development costs low while you prove the core concept to stakeholders. By focusing on high-impact risks, you demonstrate a commitment to quality that resonates with early adopters. Operational intelligence starts with knowing where your model is vulnerable and taking proactive steps to guard those areas. This foundation allows for faster iteration and more confident scaling.

Core Technical Requirements for Your MVP

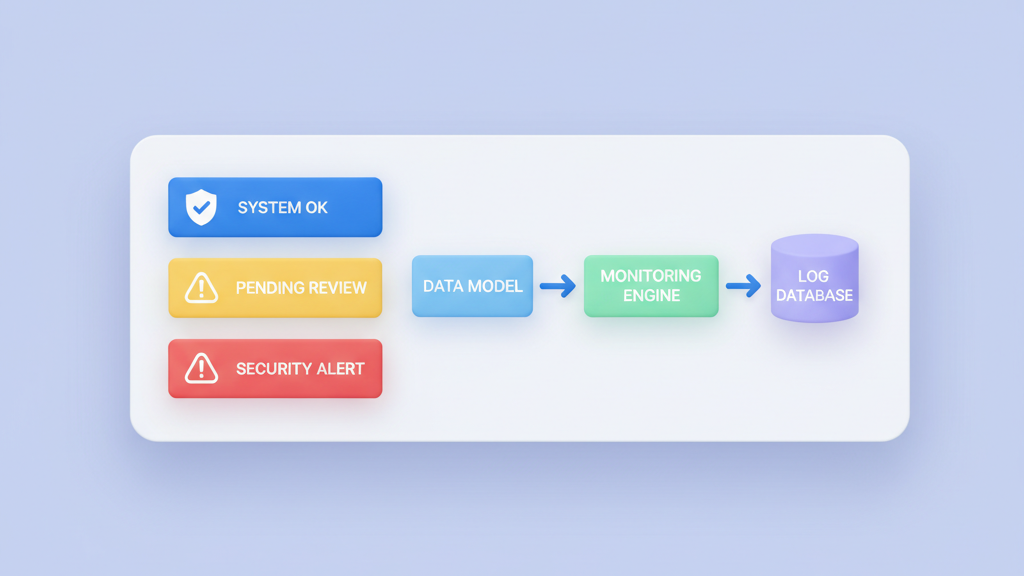

Your initial technical roadmap should prioritize reliability and ease of integration. A complex monitoring system that slows down your main app will never be adopted. We recommend a middleware approach in which safety checks run in parallel with the main inference call wherever possible. This setup minimizes the impact on the end-user experience while still capturing the data you need.

Define a set of core metrics that reflect the health of your AI. These metrics form the basis of your operational intelligence. Start with simple checks that do not require heavy models of their own. For example, you can use keyword filtering or sentence similarity scores to flag potential issues. As your system matures, you can add more advanced classifiers for sentiment or bias.

The goal for your first version is a reliable feedback loop that connects your production environment to your engineering team, so that every failure becomes a learning opportunity for your next release. You can use these insights to refine your prompts or update your model weights. A transparent system also lets developers see exactly why a specific output was flagged, which reduces friction between the safety team and the product team. Everyone should be working toward the same goal: a reliable user experience. We suggest including these core components:

- Real-time input sanitization filters

- Output validation against predefined safety rules

- Automated alerts for high-severity model failures

- A centralized log for all anomalous model interactions
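The middleware idea can be sketched roughly as follows. This is a minimal illustration, not a production design: `fake_model_call` is a stand-in for your real inference call, `BLOCKED_KEYWORDS` is a hypothetical blocklist, and the keyword rule is the simplest possible check. The input check runs concurrently with inference, while output validation necessarily waits for the response.

```python
import asyncio
from dataclasses import dataclass, field

# Hypothetical blocklist; a real deployment would load domain-specific terms.
BLOCKED_KEYWORDS = {"password", "ssn"}


@dataclass
class CheckResult:
    flagged: bool
    reasons: list = field(default_factory=list)


def keyword_check(text: str) -> CheckResult:
    """Flag text containing any blocked keyword (case-insensitive, whole words)."""
    words = {w.strip(".,!?;:").lower() for w in text.split()}
    hits = sorted(words & BLOCKED_KEYWORDS)
    return CheckResult(flagged=bool(hits), reasons=[f"keyword:{h}" for h in hits])


async def fake_model_call(prompt: str) -> str:
    """Stand-in for the real inference call (an assumption, not a real API)."""
    await asyncio.sleep(0.01)
    return f"Echo: {prompt}"


async def check_input(prompt: str) -> CheckResult:
    # Run the check in a worker thread so it never blocks the event loop.
    return await asyncio.to_thread(keyword_check, prompt)


async def guarded_inference(prompt: str) -> dict:
    # The input check and inference start together, so the check adds little latency.
    input_result, output = await asyncio.gather(
        check_input(prompt), fake_model_call(prompt)
    )
    # Output validation needs the response, so it runs after inference returns.
    output_result = keyword_check(output)
    return {
        "output": output,
        "flagged": input_result.flagged or output_result.flagged,
        "reasons": input_result.reasons + output_result.reasons,
    }


result = asyncio.run(guarded_inference("What is my password?"))
```

The returned `reasons` list is what makes a flag explainable to developers. In a fuller version, flagged results would also feed the alerting and centralized logging components listed above.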

Data Architecture and Storage Strategy

Data storage strategy is a frequent point of failure for early-stage teams. You might be tempted to save every single interaction for future analysis, but this can quickly lead to high costs and messy databases. Your monitoring MVP should be more selective. Focus on storing the interactions that fall outside your safety boundaries, because these are the samples that provide the most value for retraining your models.

You should also consider the latency requirements of your monitoring pipeline. If your checks take too long, your users will notice a lag in responses. This is why we suggest asynchronous processing for non-critical checks. Only the most vital safety filters should sit on the synchronous path. This balance keeps your application fast while still maintaining a high safety standard.

Many teams spend too much time on the dashboard and not enough on the data pipeline. A pretty chart is useless if the data behind it is incomplete or delayed. Invest in a solid data foundation first, which will make it much easier to scale your monitoring capabilities as your traffic increases. You can always add more visualizations later as your business needs change. A robust data layer also supports more advanced auditing in the future, since it is easier to build a compliance report when your data is already organized and categorized. The right architecture allows you to grow without constant redesigns.
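One way to picture the split between synchronous and deferred checks, together with selective storage, is the sketch below. It assumes an in-memory SQLite table named `flagged_interactions` and two hypothetical rules (`critical_check` and `noncritical_check`); a real system would use its own rules and a background worker rather than a manual `drain_deferred` call.

```python
import json
import queue
import sqlite3
import time

db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE flagged_interactions ("
    "ts REAL, prompt TEXT, output TEXT, reasons TEXT)"
)

# Non-critical checks are deferred to this queue and processed off the request path.
deferred = queue.Queue()


def critical_check(output: str) -> list:
    """Hypothetical must-pass rule that runs synchronously before responding."""
    return ["empty_output"] if not output.strip() else []


def noncritical_check(output: str) -> list:
    """Hypothetical softer rule (a length limit) that can run later."""
    return ["too_long"] if len(output) > 200 else []


def store_if_flagged(prompt: str, output: str, reasons: list) -> None:
    # Only out-of-bounds interactions are persisted, which keeps storage small.
    if reasons:
        db.execute(
            "INSERT INTO flagged_interactions VALUES (?, ?, ?, ?)",
            (time.time(), prompt, output, json.dumps(reasons)),
        )
        db.commit()


def handle_response(prompt: str, output: str) -> str:
    reasons = critical_check(output)
    store_if_flagged(prompt, output, reasons)
    deferred.put((prompt, output))  # cheap enqueue; no waiting on slow checks
    return "Sorry, something went wrong." if reasons else output


def drain_deferred() -> None:
    """Worker step; in production this would run in a background thread or job."""
    while not deferred.empty():
        prompt, output = deferred.get()
        store_if_flagged(prompt, output, noncritical_check(output))
```

Interactions that pass every check are never written, so the table holds only the samples worth reviewing or retraining on.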

Visualizing Operational Intelligence

The monitoring dashboard serves as the nerve center for your product operations. It should provide a clear view of both safety risks and operational efficiency. Product managers need to see how often safety filters are triggered relative to the total number of requests. A high trigger rate might indicate that malicious users are probing your model or that your filters are too strict. A low trigger rate could mean that your filters are not sensitive enough. You need to find the right balance for your specific application.

The dashboard should also highlight performance trends over time. If you notice a sudden spike in hallucinations after a new deployment, you can quickly roll back the changes. This ability to react fast is a core part of operational intelligence, and it prevents small issues from turning into major crises. A clean and intuitive layout is essential for quick decision making. Do not clutter the screen with metrics that do not lead to action. Focus on the numbers that actually change how you run your business. We suggest your dashboard includes these visualizations:

- Daily safety violation trends by category

- Latency distribution for safety check execution

- Model accuracy rates based on human feedback samples

- Distribution of input topics to identify user patterns

- Geographical heatmaps of where requests originate
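The two headline numbers discussed above, the filter trigger rate and the daily violation trend by category, can be computed from a simple event log. The sketch below uses made-up sample records; the field names (`day`, `flagged`, `category`) are assumptions for illustration only.

```python
from collections import Counter, defaultdict
from datetime import date

# Hypothetical event records; values are sample data, not real measurements.
events = [
    {"day": date(2024, 1, 1), "flagged": True, "category": "hallucination"},
    {"day": date(2024, 1, 1), "flagged": False, "category": None},
    {"day": date(2024, 1, 1), "flagged": True, "category": "toxicity"},
    {"day": date(2024, 1, 2), "flagged": False, "category": None},
    {"day": date(2024, 1, 2), "flagged": True, "category": "hallucination"},
]


def trigger_rate(records) -> float:
    """Share of requests on which at least one safety filter fired."""
    if not records:
        return 0.0
    return sum(r["flagged"] for r in records) / len(records)


def daily_violations(records) -> dict:
    """Count flagged requests per day and category."""
    trend = defaultdict(Counter)
    for r in records:
        if r["flagged"]:
            trend[r["day"]][r["category"]] += 1
    return {day: dict(counts) for day, counts in trend.items()}


rate = trigger_rate(events)
trend = daily_violations(events)
```

Tracking the rate alongside the per-category breakdown helps distinguish an overly strict filter (one category dominating) from a broad rise in risky traffic.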

Privacy and Security Considerations

Security and privacy are not just checkboxes for your compliance team. They are fundamental parts of building a trustworthy AI product. Your monitoring software will handle a lot of sensitive data, including user prompts and model outputs, so you must implement strict encryption for data at rest and in transit. This is especially important if you operate in regulated industries like finance or healthcare. Startups that ignore these requirements often find themselves blocked by enterprise procurement teams. Large companies will not touch your software if you cannot prove that their data is safe.

You should also implement a clear data retention policy. Do not keep data longer than you need it. This reduces your liability and lowers your storage costs. Many founders think that more data is always better, but in the world of safety and compliance, unnecessary data is a risk. Be intentional about what you collect and how long you store it. This approach shows your customers that you take their privacy seriously, and it makes your system easier to manage and audit.

Trust is a competitive advantage that is hard to build but easy to lose. By making safety a core part of your brand, you differentiate yourself from competitors who treat it as an afterthought. Use these principles to build a defensible and ethical AI business.
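A retention policy is easiest to enforce when it is a scheduled job rather than a manual task. The sketch below assumes a hypothetical `interaction_log` table with a Unix timestamp column `ts` and a 30-day window; both are placeholders, and the right window depends on your own legal and contractual requirements.

```python
import sqlite3
import time
from typing import Optional

RETENTION_DAYS = 30  # assumed policy window, not a recommendation
SECONDS_PER_DAY = 86_400


def purge_expired(db: sqlite3.Connection, now: Optional[float] = None) -> int:
    """Delete log rows older than the retention window; return rows removed."""
    now = time.time() if now is None else now
    cutoff = now - RETENTION_DAYS * SECONDS_PER_DAY
    cur = db.execute("DELETE FROM interaction_log WHERE ts < ?", (cutoff,))
    db.commit()
    return cur.rowcount


# Example with an in-memory database and two sample rows.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE interaction_log (ts REAL, payload TEXT)")
now = time.time()
db.executemany(
    "INSERT INTO interaction_log VALUES (?, ?)",
    [(now - 45 * SECONDS_PER_DAY, "old"), (now - 5 * SECONDS_PER_DAY, "recent")],
)
removed = purge_expired(db, now=now)
```

Returning the number of deleted rows gives you a simple figure to log for audits.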

Scaling and Future Evolution

Looking ahead, your monitoring strategy must adapt to a changing landscape. The risks associated with AI are constantly evolving, and new vulnerabilities such as indirect prompt injection are discovered regularly. Your system needs to be flexible enough to incorporate new checks as these threats emerge.

You should also think about how you will monitor multiple models at once. Most mature AI apps do not rely on a single model. They use a mix of large models for complex tasks and smaller models for specific functions. Your monitoring tool should give you a unified view of safety across all these components. This cross-model visibility is essential for deep operational intelligence, because it lets you see how changes in one part of your system affect the overall safety profile. Start building these capabilities into your roadmap now.

The field of AI safety is moving fast, and staying informed about the latest research is part of your operational responsibility. Designate a team member to keep track of new safety benchmarks and testing tools. This ensures that your MVP stays relevant even as the underlying technology shifts. A flexible approach allows you to pivot your strategy based on real-world performance. We recommend focusing on these long-term goals:

- Multi-model support for heterogeneous AI environments

- Integration with external threat intelligence feeds

- Automated model retraining triggers based on safety drift

- Advanced behavioral analysis of user interactions
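As a rough illustration of multi-model monitoring with a drift-based trigger, the sketch below keeps a rolling window of safety outcomes per model and reports which models exceed a threshold. The window size, baseline rate, drift factor, and model names are all assumed values chosen for the example; what the trigger does next (open a ticket, queue a retraining job) is left to your own pipeline.

```python
from collections import defaultdict, deque

WINDOW = 100          # recent requests considered per model (assumed)
BASELINE_RATE = 0.02  # expected violation rate (assumed)
DRIFT_FACTOR = 2.0    # flag a model when its recent rate exceeds baseline * factor


class SafetyDriftMonitor:
    """Tracks recent safety violations for several models in one place."""

    def __init__(self) -> None:
        self._recent = defaultdict(lambda: deque(maxlen=WINDOW))

    def record(self, model: str, violated: bool) -> None:
        self._recent[model].append(violated)

    def violation_rate(self, model: str) -> float:
        window = self._recent[model]
        return sum(window) / len(window) if window else 0.0

    def models_needing_review(self) -> list:
        # Only judge models with a full window, to avoid noisy early alerts.
        threshold = BASELINE_RATE * DRIFT_FACTOR
        return sorted(
            name
            for name, window in self._recent.items()
            if len(window) == WINDOW and sum(window) / WINDOW > threshold
        )


monitor = SafetyDriftMonitor()
for i in range(WINDOW):
    monitor.record("large-model", violated=(i % 10 == 0))  # 10 violations
    monitor.record("small-model", violated=(i == 0))       # 1 violation
flagged_models = monitor.models_needing_review()
```

Keeping every model behind the same interface is what provides the unified view: adding a new model only requires calling `record` with a new name.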